Volterra series

The Volterra series is a model for nonlinear behavior similar to the Taylor series. It differs from the Taylor series in its ability to capture 'memory' effects. The Taylor series can be used to approximate the response of a nonlinear system to a given input if the output of the system depends strictly on the input at that particular time. In the Volterra series, the output of the nonlinear system depends on the input to the system at all other times (for a causal system, at all earlier times). This provides the ability to capture the 'memory' effect of devices such as capacitors and inductors.

It has been applied in the fields of medicine (biomedical engineering) and biology, especially neuroscience. It is also used in electrical engineering to model intermodulation distortion in many devices including power amplifiers and frequency mixers. Its main advantage lies in its generality: it can represent a wide range of systems. It is therefore sometimes referred to as a non-parametric model.

In mathematics, a Volterra series denotes a functional expansion of a dynamic, nonlinear, time-invariant functional. Volterra series are frequently used in system identification. The Volterra series, which is used to prove the Volterra theorem, is an infinite sum of multidimensional convolution integrals.


History

The Volterra series is a modernized version of the theory of analytic functionals due to the Italian mathematician Vito Volterra, in work dating from 1887. Norbert Wiener became interested in this theory in the 1920s through contact with Volterra's student Paul Lévy. Wiener applied his theory of Brownian motion to the integration of Volterra analytic functionals. The use of Volterra series for system analysis originated from a restricted 1942 wartime report[1] by Wiener, then a professor of mathematics at MIT. The report used the series to make an approximate analysis of the effect of radar noise in a nonlinear receiver circuit, and it became public after the war.[2] As a general method for analyzing nonlinear systems, the Volterra series came into use after about 1957 as the result of a series of reports, at first privately circulated, from MIT and elsewhere.[3] The name Volterra series came into use a few years later.

Mathematical theory

The theory of Volterra series can be viewed from two different perspectives: one considers either an operator mapping between two real (or complex) function spaces, or a functional mapping from a real (or complex) function space into the real (or complex) numbers. The latter, functional perspective is used more frequently because of the assumed time-invariance of the system.

Continuous time

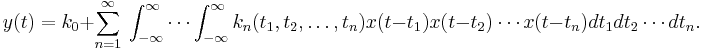

A continuous time-invariant system with x(t) as input and y(t) as output can be expanded in Volterra series as:

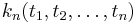

is called the n-th order Volterra kernel which can be regarded as a higher-order impulse response of the system. Sometimes the nth order term is divided by n!, a convention which is convenient when considering the combination of Volterra systems by placing one after the other ('cascading').

is called the n-th order Volterra kernel which can be regarded as a higher-order impulse response of the system. Sometimes the nth order term is divided by n!, a convention which is convenient when considering the combination of Volterra systems by placing one after the other ('cascading').

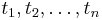

The causality condition: since in any physically realizable system the output can only depend on previous values of the input, the kernels $h_n(\tau_1, \dots, \tau_n)$ will be zero if any of the variables $\tau_1, \dots, \tau_n$ are negative. The integrals may then be written over the half range from zero to infinity.

Discrete time

Let F be a continuous functional system that is time-invariant and has finite memory. Then Fréchet's theorem states that this system can be approximated uniformly, and to an arbitrary degree of precision, by a Volterra series of sufficiently high but finite order. The input set over which this approximation holds encompasses all equicontinuous, uniformly bounded functions. In a physically realizable setting, this constraint on the input set should always hold.
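In discrete time, a commonly used finite model truncates the series at second order with a memory of M samples: $y[n] = h_0 + \sum_{k=0}^{M-1} h_1[k]\,x[n-k] + \sum_{k_1=0}^{M-1}\sum_{k_2=0}^{M-1} h_2[k_1,k_2]\,x[n-k_1]\,x[n-k_2]$. The Python (NumPy) sketch below evaluates this sum directly. The function name `volterra2`, the restriction to second order, and the convention that input samples before n = 0 are zero are illustrative assumptions.

```python
import numpy as np

def volterra2(x, h0, h1, h2):
    """Evaluate a causal, second-order discrete Volterra series.

    x  : input signal, shape (N,)
    h0 : constant (zeroth-order) term
    h1 : first-order kernel, shape (M,)
    h2 : second-order kernel, shape (M, M)
    Input samples before index 0 are taken to be zero (an assumption).
    """
    x = np.asarray(x, dtype=float)
    h1 = np.asarray(h1, dtype=float)
    h2 = np.asarray(h2, dtype=float)
    N, M = x.shape[0], h1.shape[0]
    # Row n holds the memory window [x[n], x[n-1], ..., x[n-M+1]].
    X = np.zeros((N, M))
    for k in range(min(M, N)):
        X[k:, k] = x[:N - k]
    linear = X @ h1                                    # sum_k h1[k] x[n-k]
    quadratic = np.einsum("ni,ij,nj->n", X, h2, X)     # double sum over k1, k2
    return h0 + linear + quadratic

x = np.array([1.0, 2.0, -1.0])
# Memoryless special case (Taylor-type polynomial): y[n] = 0.5*x[n] + 0.25*x[n]**2
print(volterra2(x, 0.0, [0.5, 0.0], [[0.25, 0.0], [0.0, 0.0]]))  # values: 0.75, 2.0, -0.25
# With memory: y[n] = 0.25*x[n]*x[n-1] depends on the previous sample as well
print(volterra2(x, 0.0, [0.0, 0.0], [[0.0, 0.25], [0.0, 0.0]]))  # values: 0.0, 0.5, -0.5
```

Keeping only the zero-lag kernel entries reduces the model to a static polynomial; any nonzero entry at a positive lag lets the output depend on past inputs, which is the memory effect described in the introduction.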

Methods to estimate the kernel coefficients

Estimating the Volterra coefficients individually is complicated because the basis functionals of the Volterra series are correlated. This leads to the problem of simultaneously solving a set of integral equations for the coefficients. Hence, estimation of Volterra coefficients is generally performed by estimating the coefficients of an orthogonalized series, e.g. the Wiener series, and then recomputing the coefficients of the original Volterra series. The main appeal of the Volterra series over the orthogonalized series lies in its intuitive, canonical structure, i.e. all interactions of the input have one fixed degree. The orthogonalized basis functionals will generally be quite complicated.
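The correlation can be seen in the simplest case: a single zero-lag second-order term driven by zero-mean white Gaussian noise of variance $\sigma^2$. The Volterra functional $x[n]^2$ has mean $\sigma^2$ and is therefore correlated with the constant (zeroth-order) functional, whereas the corresponding second-order Wiener functional subtracts that mean, $x[n]^2 - \sigma^2$, and is orthogonal to it. The Python sketch below checks this numerically using the empirical mean of products as the inner product; the variance, seed and sample size are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
sigma = 1.5
x = rng.normal(0.0, sigma, size=200_000)  # zero-mean white Gaussian input

const = np.ones_like(x)           # zeroth-order basis functional
volterra_sq = x ** 2              # zero-lag second-order Volterra functional
wiener_sq = x ** 2 - sigma ** 2   # corresponding second-order Wiener functional

def inner(a, b):
    """Empirical inner product <a, b> = mean(a * b)."""
    return float(np.mean(a * b))

print(inner(const, volterra_sq))  # close to sigma**2 = 2.25: correlated with the constant
print(inner(const, wiener_sq))    # close to 0: orthogonal to the constant
```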

An important aspect in which the following methods differ is whether the orthogonalization of the basis functionals is performed over the idealized specification of the input signal (e.g. Gaussian white noise) or over the actual realization of the input (i.e. the pseudo-random, bounded, almost-white version of Gaussian white noise, or any other stimulus). The latter methods, despite their lack of mathematical elegance, have been shown to be more flexible (as arbitrary inputs can be easily accommodated) and more precise (because the idealized version of the input signal is not always realizable).

Crosscorrelation method

This method, developed by Lee & Schetzen, orthogonalizes with respect to the actual mathematical description of the signal, i.e. the projection onto the new basis functionals is based on knowledge of the moments of the random signal.
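For a zero-mean white Gaussian input of variance $\sigma^2$, the first-order step of a cross-correlation estimate takes $\hat h_1[k] = \overline{y[n]\,x[n-k]}/\sigma^2$, using the known second moment of the input. The Python sketch below applies only this first-order step to a simulated system with hypothetical first- and second-order kernels. Because odd moments of a zero-mean Gaussian vanish, the second-order term does not bias the estimate; odd higher-order kernels (absent here) would contribute to it. Kernel values, memory length and sample count are illustrative assumptions.

```python
import numpy as np

def lagged(x, M):
    """Matrix whose row n is [x[n], x[n-1], ..., x[n-M+1]] (zeros before n = 0)."""
    N = len(x)
    X = np.zeros((N, M))
    for k in range(min(M, N)):
        X[k:, k] = x[:N - k]
    return X

rng = np.random.default_rng(1)
sigma = 1.0
N, M = 200_000, 3
x = rng.normal(0.0, sigma, size=N)

# Hypothetical "true" system: first- plus second-order kernels.
h1_true = np.array([1.0, -0.5, 0.25])
h2_true = np.array([[0.3, 0.1, 0.0],
                    [0.1, 0.0, 0.0],
                    [0.0, 0.0, -0.2]])
X = lagged(x, M)
y = X @ h1_true + np.einsum("ni,ij,nj->n", X, h2_true, X)

# First-order cross-correlation estimate: mean of y[n] * x[n-k], divided by sigma^2.
h1_hat = (X.T @ y) / N / sigma ** 2
print(np.round(h1_hat, 2))  # close to h1_true
```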

Exact orthogonal algorithm

This method and its more efficient version (Fast Orthogonal Algorithm) were invented by Korenberg. In this method, the orthogonalization is performed empirically over the actual input. It has been shown to be more precise than the Crosscorrelation method. Other advantages are that arbitrary inputs can be used for the orthogonalization and that fewer data points suffice to reach a desired level of accuracy. Also, estimation can be performed incrementally until some criterion is fulfilled.
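The general idea of orthogonalizing over the actual input record can be illustrated with a plain modified Gram-Schmidt procedure applied to candidate Volterra terms built from the measured input. This is a generic sketch under that assumption, not Korenberg's exact or fast orthogonal algorithm. Terms are orthogonalized one at a time over the data, each term's coefficient in the orthogonal basis is computed once and not revised later, and the remaining mean-squared error after each step could serve as a stopping criterion. The input is deliberately non-Gaussian (uniform); the candidate terms and the system are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
N = 5_000
x = rng.uniform(-1.0, 1.0, size=N)       # arbitrary (non-Gaussian) input
x1 = np.concatenate(([0.0], x[:-1]))     # x[n-1], zero before n = 0

# Hypothetical candidate terms of a second-order, two-lag Volterra model.
terms = {
    "1": np.ones(N),
    "x[n]": x,
    "x[n-1]": x1,
    "x[n]^2": x * x,
    "x[n]x[n-1]": x * x1,
    "x[n-1]^2": x1 * x1,
}
y = 0.5 + 2.0 * x - x1 + 0.8 * x * x1    # hypothetical system output

residual = y.copy()
basis = []          # orthogonalized terms accepted so far
mse_history = []    # (term name, mean-squared residual after adding it)
for name, p in terms.items():
    w = p.copy()
    for q in basis:                      # remove components along earlier terms
        w -= (w @ q) / (q @ q) * q
    if w @ w < 1e-12 * N:                # term adds nothing new over this record
        continue
    g = (residual @ w) / (w @ w)         # coefficient in the orthogonal basis
    residual -= g * w
    basis.append(w)
    mse_history.append((name, float(np.mean(residual ** 2))))

for name, mse in mse_history:
    print(f"after {name:>11}: MSE = {mse:.2e}")
```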

Linear regression

Linear regression is a standard tool from linear analysis. Hence, one of its main advantages is the widespread availability of standard tools for solving linear regressions efficiently. It has some educational value, since it highlights the basic property of Volterra series: a linear combination of nonlinear basis functionals. For estimation, the order of the original system should be known, since the Volterra basis functionals are not orthogonal and estimation thus cannot be performed incrementally.
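Because the series is linear in its kernel values, a truncated discrete model can be fitted by ordinary least squares on a matrix whose columns are products of lagged inputs. The sketch below fits a second-order model with memory length M using `numpy.linalg.lstsq`. The helper name `volterra_regressors`, the use of only the k1 ≤ k2 products (which assumes a symmetric second-order kernel) and the simulated system are assumptions for illustration. It also shows the non-orthogonality of the Volterra basis: fitting without the second-order terms shifts the constant coefficient.

```python
import numpy as np

def volterra_regressors(x, M):
    """Columns: 1, x[n-k] for k < M, and x[n-k1]*x[n-k2] for k1 <= k2 < M."""
    N = len(x)
    X = np.zeros((N, M))
    for k in range(min(M, N)):
        X[k:, k] = x[:N - k]             # X[n, k] = x[n-k], zero before n = 0
    quad = [X[:, i] * X[:, j] for i in range(M) for j in range(i, M)]
    return np.column_stack([np.ones(N), X] + quad)

rng = np.random.default_rng(3)
x = rng.normal(size=2_000)
M = 2
Phi = volterra_regressors(x, M)
# Columns of Phi: 1, x[n], x[n-1], x[n]^2, x[n]x[n-1], x[n-1]^2
theta_true = np.array([0.5, 1.0, -0.4, 0.3, 0.2, 0.0])   # hypothetical system
y = Phi @ theta_true + 0.01 * rng.normal(size=len(x))   # small measurement noise

theta_hat, *_ = np.linalg.lstsq(Phi, y, rcond=None)
print(np.round(theta_hat, 2))            # close to theta_true

# Fitting only the constant and linear terms shifts the constant coefficient,
# because x[n]^2 (mean 1 here) is correlated with the constant basis functional.
theta_lin, *_ = np.linalg.lstsq(Phi[:, :3], y, rcond=None)
print(round(theta_lin[0], 2))            # close to 0.5 + 0.3 = 0.8, not 0.5
```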

Kernel method

This method was invented by Franz & Schölkopf and is based on statistical learning theory. Consequently, this approach is also based on minimizing the empirical error (often called empirical risk minimization). Franz and Schölkopf proposed that the kernel method could essentially replace the Volterra series representation, while noting that the latter is more intuitive.
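One way to see the link: an inhomogeneous polynomial kernel $k(\mathbf u, \mathbf v) = (1 + \mathbf u^\top \mathbf v)^p$ evaluated on windows of past input values spans exactly the products of lagged inputs up to order $p$, i.e. a p-th order Volterra series with finite memory, without forming those products explicitly. The sketch below uses plain kernel ridge regression with p = 2. It is a generic illustration under assumed settings (memory length `M`, regularization `lam`, simulated system), not Franz and Schölkopf's exact procedure.

```python
import numpy as np

def windows(x, M):
    """Row n is the input window [x[n], x[n-1], ..., x[n-M+1]] (zeros before n = 0)."""
    N = len(x)
    X = np.zeros((N, M))
    for k in range(min(M, N)):
        X[k:, k] = x[:N - k]
    return X

def poly_kernel(A, B, p=2):
    """Inhomogeneous polynomial kernel (1 + a.b)^p between rows of A and B."""
    return (1.0 + A @ B.T) ** p

rng = np.random.default_rng(4)
M, lam = 3, 1e-6
x_train = rng.normal(size=400)
x_test = rng.normal(size=200)

def system(x):
    """Hypothetical second-order system with memory 3."""
    W = windows(x, M)
    return 0.2 + W @ np.array([1.0, 0.5, -0.3]) + 0.4 * W[:, 0] * W[:, 2]

U_train, U_test = windows(x_train, M), windows(x_test, M)
y_train = system(x_train)

# Kernel ridge regression: alpha = (K + lam*I)^{-1} y, prediction = K_test @ alpha.
K = poly_kernel(U_train, U_train)
alpha = np.linalg.solve(K + lam * np.eye(len(K)), y_train)
y_pred = poly_kernel(U_test, U_train) @ alpha

rmse = np.sqrt(np.mean((y_pred - system(x_test)) ** 2))
print(f"test RMSE: {rmse:.1e}")  # small, since the system lies in the kernel's span
```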

Differential sampling

This method was developed by van Hemmen and coworkers and utilizes Dirac delta functions to sample the Volterra coefficients.
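Why impulsive inputs help can be shown on a discrete second-order system with a symmetric kernel. Let $y_a$ and $y_b$ be the responses to unit impulses at times a and b, $y_{ab}$ the response to both together, and $y_0$ the response to zero input. Then $y_{ab}[n] - y_a[n] - y_b[n] + y_0[n] = 2\,h_2[n-a,\,n-b]$, which isolates one slice of the second-order kernel. The Python sketch below checks this identity. It is a simplified impulse-probing illustration under these assumptions (discrete time, second order, symmetric hypothetical kernel), not van Hemmen and coworkers' actual procedure.

```python
import numpy as np

def volterra2(x, h0, h1, h2):
    """Causal second-order discrete Volterra system (zeros before n = 0)."""
    N, M = len(x), len(h1)
    X = np.zeros((N, M))
    for k in range(min(M, N)):
        X[k:, k] = x[:N - k]
    return h0 + X @ h1 + np.einsum("ni,ij,nj->n", X, h2, X)

# Hypothetical kernels; h2 is symmetric.
h0 = 0.1
h1 = np.array([1.0, 0.5, 0.2])
h2 = np.array([[0.3, 0.1, -0.2],
               [0.1, 0.4, 0.05],
               [-0.2, 0.05, 0.0]])

N, a, b = 8, 1, 2

def impulses(*times):
    """Input of length N with unit impulses at the given times."""
    x = np.zeros(N)
    x[list(times)] = 1.0
    return x

y0 = volterra2(impulses(), h0, h1, h2)
ya = volterra2(impulses(a), h0, h1, h2)
yb = volterra2(impulses(b), h0, h1, h2)
yab = volterra2(impulses(a, b), h0, h1, h2)

# Equals h2[n-a, n-b] where both indices lie in 0..2, and 0 elsewhere.
slice_est = (yab - ya - yb + y0) / 2
print(np.round(slice_est, 3))  # nonzero only at n = 2 (h2[1, 0] = 0.1) and n = 3 (h2[2, 1] = 0.05)
```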


References

- [1] Wiener N: Response of a nonlinear system to noise. Radiation Lab MIT 1942, restricted report V-16, no. 129 (112 pp.). Declassified July 1946; published as rep. no. PB-1-58087, U.S. Dept. of Commerce.

- [2] Ikehara S: A method of Wiener in a nonlinear circuit. MIT, Dec 10 1951, tech. rep. no. 217, Res. Lab. Electron.

- [3] Early MIT reports by Brilliant, Zames, George, Hause, and Chesler can be found on dspace.mit.edu.

- Schetzen M: The Volterra and Wiener Theories of Nonlinear Systems, (1980)

- Rugh W J: Nonlinear System Theory: The Volterra-Wiener Approach. Baltimore 1981 (Johns Hopkins Univ Press) Many online versions, e.g. www.ece.jhu.edu/~rugh/volterra/book.pdf

- Kuo Y L: Frequency-domain analysis of weakly nonlinear networks, IEEE Trans. Circuits & Systems, vol.CS-11(4) Aug 1977; vol.CS-11(5) Oct 1977 2-6.

- Korenberg M.J., Hunter I.W.: The Identification of Nonlinear Biological Systems: Volterra Kernel Approaches. Annals of Biomedical Engineering (1996), Volume 24, Number 2.

- Bussgang, J.J.; Ehrman, L.; Graham, J.W: Analysis of nonlinear systems with multiple inputs, Proc. IEEE, vol.62, no.8, pp.1088-1119, Aug. 1974

- Barrett J.F: Bibliography of Volterra series, Hermite functional expansions, and related subjects. Dept. Electr. Engrg, Univ.Tech. Eindhoven, NL 1977, T-H report 77-E-71. (Chronological listing of early papers to 1977) URL: http://alexandria.tue.nl/extra1/erap/publichtml/7704263.pdf

- Giannakis G.B., Serpedin E.: A bibliography on nonlinear system identification. Signal Processing, 81 (2001) 533-580. (Alphabetic listing to 2001) www.elsevier.nl/locate/sigpro